Replaces PR #3493's blanket fatal abort with a "tell the model + throttle the bypass loop" policy. Workspace-bound rejections are now ordinary recoverable tool errors enriched with a structured "this is a hard policy boundary" instruction; SSRF stays the only marker that aborts the turn.

Why the fatal-abort approach broke
----------------------------------

PR #3493 promoted every shell `_guard_command` and filesystem path-resolution rejection to a turn-fatal RuntimeError. Two of those messages (`path outside working dir` and `path traversal detected`) come from heuristic substring scans of the raw command, so legitimate commands like `rm <ws>/x.txt 2>/dev/null` or `find . -type f` killed the user's turn (#3599). On channels with outbound dedupe (Telegram), the user just saw silence (#3605), and the noise polluted the LLM's context until it started hallucinating guard rejections on plain relative paths (#3597).

Why we still need *some* throttle
---------------------------------

The original #3493 pain point was real: once refused, the LLM would swap tools and try again -- read_file -> exec cat -> exec cp -> bash -c -> ln -sf -> python -c open(...). Simply removing the fatal escape lets that loop run unchecked until max_iterations.

What this commit does
---------------------

- `nanobot/utils/runtime.py`: add `workspace_violation_signature` and `repeated_workspace_violation_error`. The signature normalizes filesystem `path` arguments and the first absolute path inside an exec command, so swapping tools against the same outside target hits the same throttle bucket. Two soft attempts are allowed; the third attempt's tool result is replaced with a hard "stop trying to bypass" message that quotes the target path and tells the model to ask the user for help.
- `nanobot/agent/runner.py`: split classification into `_is_ssrf_violation` (still fatal) and `_is_workspace_violation` (now soft). All three failure branches in `_run_tool` (prep_error / exception / Error result) route through a shared `_classify_violation` that bumps the per-turn `workspace_violation_counts` dict and either keeps the tool's own message or substitutes the throttle escalation. `_execute_tools` now threads that dict alongside the existing `external_lookup_counts`.
- `nanobot/agent/tools/shell.py`: append a structured boundary note to every workspace-bound guard rejection (`working_dir could not be resolved`, `working_dir is outside`, `path outside working dir`, `path traversal detected`). SSRF errors stay short and direct so the model doesn't try to "phrase around" them. The existing `2>/dev/null` allow-list and benign device passthrough from the previous commit remain.
- `nanobot/agent/tools/filesystem.py`: append the same boundary note to the `outside allowed directory` PermissionError so read_file / write_file / list_dir errors give the LLM the same explicit hint.

Tests
-----

- `tests/utils/test_workspace_violation_throttle.py` (new): the signature collapses across read_file/exec/python -c against the same path, different paths get independent budgets, and escalation does not fire before the third attempt.
- `tests/agent/test_runner.py`:
  - `test_runner_does_not_abort_on_workspace_violation_anymore` -- v2 contract: a filesystem PermissionError is now soft; the runner moves to the next iteration and finalizes cleanly.
  - `test_is_ssrf_violation_remains_fatal` + the existing `test_runner_aborts_on_ssrf_violation` -- SSRF still aborts on the first attempt.
  - `test_runner_lets_llm_recover_from_shell_guard_path_outside` -- end-to-end recovery from `path outside working dir`.
  - `test_runner_throttles_repeated_workspace_bypass_attempts` -- four bypass attempts against the same outside target produce at least one `workspace_violation_escalated` event, and the run completes naturally without aborting the turn.
  - The two `_execute_tools` direct-call tests now pass the new `workspace_violation_counts` dict.
- `tests/tools/test_tool_validation.py`: relax three `==` assertions to `startswith` plus a "hard policy boundary" substring check to match the new structured error messages.
- `tests/tools/test_exec_security.py` keeps the prior `2>/dev/null` regression and the `> /etc/issue` negative case from the previous commit on this branch; both still pass under the new policy.

Coverage status: full pytest run is 2648 passed / 2 skipped (was 2638 / 2 on origin/main). Ruff is clean for every file touched in this commit.

Co-authored-by: Cursor <cursoragent@cursor.com>
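The throttle described in the commit can be illustrated with a small, self-contained Python sketch. It is not the nanobot implementation: the names `workspace_violation_signature` and `repeated_workspace_violation_error` are borrowed from the commit message, but their signatures and bodies here are guesses, and `classify_violation`, `TurnAborted`, `SSRF_MARKER`, and the `path` / `command` argument names are hypothetical. The sketch shows the three ideas the commit describes: SSRF stays fatal, workspace violations are bucketed by a normalized target path, and the third attempt against the same target gets the escalation text instead of the tool's own error.

```python
from __future__ import annotations

import posixpath
import re

# Marker strings are quoted from the commit message; matching them by
# lowercase substring is an assumption about how nanobot classifies errors.
WORKSPACE_MARKERS = (
    "working_dir could not be resolved",
    "working_dir is outside",
    "path outside working dir",
    "path traversal detected",
    "outside allowed directory",
)
SSRF_MARKER = "ssrf"  # hypothetical: the real SSRF error text is not given
SOFT_ATTEMPTS = 2  # two soft attempts per target; the third escalates

# First absolute path that starts a shell word (at the start, or after
# whitespace, a quote, "=", "(" or ">"), so "./x" is not mistaken for "/x".
_ABS_PATH = re.compile(r"(?:^|(?<=[\s'\"=(>]))(/[^\s'\";|&<>()]+)")


class TurnAborted(RuntimeError):
    """Hypothetical fatal error type: SSRF is the only violation that ends the turn."""


def workspace_violation_signature(tool_name: str, args: dict) -> str | None:
    """Bucket a tool call by the path it targets (hypothetical argument names)."""
    if isinstance(args.get("path"), str):
        raw = args["path"]
    elif tool_name == "exec" and isinstance(args.get("command"), str):
        match = _ABS_PATH.search(args["command"])
        if match is None:
            return None
        raw = match.group(1)
    else:
        return None
    # normpath collapses "//", "." and ".." so equivalent spellings share a bucket.
    return posixpath.normpath(raw)


def repeated_workspace_violation_error(target: str) -> str:
    """Escalation text that replaces the tool's own error (wording is illustrative)."""
    return (
        f"Error: {target!r} is outside the workspace and this is a hard policy "
        "boundary. Stop trying to bypass it with other tools; ask the user for help."
    )


def classify_violation(tool_name: str, args: dict, error_text: str,
                       counts: dict[str, int]) -> str:
    """Return the tool result the model should see for a failed tool call."""
    lowered = error_text.lower()
    if SSRF_MARKER in lowered:
        raise TurnAborted(error_text)  # still fatal on the first attempt
    if not any(marker in lowered for marker in WORKSPACE_MARKERS):
        return error_text  # unrelated failure: pass it through unchanged
    signature = workspace_violation_signature(tool_name, args)
    if signature is None:
        return error_text  # no recognizable target, so nothing to throttle
    counts[signature] = counts.get(signature, 0) + 1  # per-turn budget
    if counts[signature] <= SOFT_ATTEMPTS:
        return error_text  # soft attempt: keep the tool's own message
    return repeated_workspace_violation_error(signature)
```

Feeding it the bypass sequence from the commit message (read_file, then exec cat, then python -c, all aimed at `/etc/passwd` under different spellings) returns the tool's own error twice and the escalation text on the third call, while a different target starts with its own fresh budget. The `counts` dict plays the role of the per-turn `workspace_violation_counts`, so a new turn would start from an empty dict.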

🐈 nanobot is an open-source and ultra-lightweight AI agent in the spirit of OpenClaw, Claude Code, and Codex. It keeps the core agent loop small and readable while still supporting chat channels, memory, MCP and practical deployment paths, so you can go from local setup to a long-running personal agent with minimal overhead.

📢 News

- 2026-04-29 🚀 Released v0.1.5.post3: Smarter threads on Feishu, Discord, Slack, and Teams; DeepSeek-V4; Hugging Face & Olostep; choices, `/history`, and steadier long chats. Please see release notes for details.

- 2026-04-28 🌐 Olostep web search, Hugging Face provider, safer workspace-tool interruptions.

- 2026-04-27 💬 `/history` command, smarter session replay caps, smoother Discord / Slack threads.

- 2026-04-26 🧭 Natural cron reminders, thread-aware restarts, safer local provider and shell behavior.

- 2026-04-25 🧩 `ask_user` choices, macOS LaunchAgent deployment, MSTeams stale-reference cleanup.

- 2026-04-24 🎥 Video attachments for channels, DeepSeek thinking control, faster document startup.

- 2026-04-23 🧵 Discord thread sessions, Telegram inline buttons, structured tool progress updates.

- 2026-04-22 🔎 GitHub Copilot GPT-5 / o-series support, configurable web fetch, WebUI image uploads.

- 2026-04-21 🚀 Released v0.1.5.post2 — Windows & Python 3.14 support, Office document reading, SSE streaming for the OpenAI-compatible API, and stronger reliability across sessions, memory, and channels. Please see release notes for details.

- 2026-04-20 🎨 Kimi K2.6 support, Telegram long-message split, WebUI typography & dark-mode polish.

- 2026-04-19 🌐 WebUI i18n locale switcher, atomic session writes with auto-repair.

- 2026-04-18 🧪 Initial WebUI chat, smarter setup wizard menus, WebSocket multi-chat multiplexing.

- 2026-04-17 🪟 Windows & Python 3.14 CI, Dream line-age memory, email self-loop guard.

- 2026-04-16 📡 SSE streaming for OpenAI-compatible API, Discord channel allow-list.

- 2026-04-15 🎛️ LM Studio & nullable API keys, MiniMax thinking endpoint, runtime SelfTool.

- 2026-04-14 🚀 Released v0.1.5.post1 — Dream skill discovery, mid-turn follow-up injection, WebSocket channel, and deeper channel integrations. Please see release notes for details.

- 2026-04-13 🛡️ Agent turn hardened — user messages persisted early, auto-compact skips active tasks.

- 2026-04-12 🔒 Lark global domain support, Dream learns discovered skills, shell sandbox tightened.

- 2026-04-11 ⚡ Context compact shrinks sessions on the fly; Kagi web search; QQ & WeCom full media.

Earlier news

- 2026-04-10 📓 Notebook editing tool, multiple MCP servers, Feishu streaming & done-emoji.

- 2026-04-09 🔌 WebSocket channel, unified cross-channel session, `disabled_skills` config.

- 2026-04-08 📤 API file uploads, OpenAI reasoning auto-routing with Responses fallback.

- 2026-04-07 🧠 Anthropic adaptive thinking, MCP resources & prompts exposed as tools.

- 2026-04-06 🛰️ Langfuse observability, unified Whisper transcription, email attachments.

- 2026-04-05 🚀 Released v0.1.5 — sturdier long-running tasks, Dream two-stage memory, production-ready sandboxing and programming Agent SDK. Please see release notes for details.

- 2026-04-04 🚀 Jinja2 response templates, Dream memory hardened, smarter retry handling.

- 2026-04-03 🧠 Xiaomi MiMo provider, chain-of-thought reasoning visible, Telegram UX polish.

- 2026-04-02 🧱 Long-running tasks run more reliably — core runtime hardening.

- 2026-04-01 🔑 GitHub Copilot auth restored; stricter workspace paths; OpenRouter Claude caching fix.

- 2026-03-31 🛰️ WeChat multimodal alignment, Discord/Matrix polish, Python SDK facade, MCP and tool fixes.

- 2026-03-30 🧩 OpenAI-compatible API tightened; composable agent lifecycle hooks.

- 2026-03-29 💬 WeChat voice, typing, QR/media resilience; fixed-session OpenAI-compatible API.

- 2026-03-28 📚 Provider docs refresh; skill template wording fix.

- 2026-03-27 🚀 Released v0.1.4.post6 — architecture decoupling, litellm removal, end-to-end streaming, WeChat channel, and a security fix. Please see release notes for details.

- 2026-03-26 🏗️ Agent runner extracted and lifecycle hooks unified; stream delta coalescing at boundaries.

- 2026-03-25 🌏 StepFun provider, configurable timezone, Gemini thought signatures.

- 2026-03-24 🔧 WeChat compatibility, Feishu CardKit streaming, test suite restructured.

- 2026-03-23 🔧 Command routing refactored for plugins, WhatsApp/WeChat media, unified channel login CLI.

- 2026-03-22 ⚡ End-to-end streaming, WeChat channel, Anthropic cache optimization, `/status` command.

- 2026-03-21 🔒 Replace `litellm` with native `openai` + `anthropic` SDKs. Please see commit.

- 2026-03-20 🧙 Interactive setup wizard: pick your provider, model autocomplete, and you're good to go.

- 2026-03-19 💬 Telegram gets more resilient under load; Feishu now renders code blocks properly.

- 2026-03-18 📷 Telegram can now send media via URL. Cron schedules show human-readable details.

- 2026-03-17 ✨ Feishu formatting glow-up, Slack reacts when done, custom endpoints support extra headers, and image handling is more reliable.

- 2026-03-16 🚀 Released v0.1.4.post5 — a refinement-focused release with stronger reliability and channel support, and a more dependable day-to-day experience. Please see release notes for details.

- 2026-03-15 🧩 DingTalk rich media, smarter built-in skills, and cleaner model compatibility.

- 2026-03-14 💬 Channel plugins, Feishu replies, and steadier MCP, QQ, and media handling.

- 2026-03-13 🌐 Multi-provider web search, LangSmith, and broader reliability improvements.

- 2026-03-12 🚀 VolcEngine support, Telegram reply context, `/restart`, and sturdier memory.

- 2026-03-11 🔌 WeCom, Ollama, cleaner discovery, and safer tool behavior.

- 2026-03-10 🧠 Token-based memory, shared retries, and cleaner gateway and Telegram behavior.

- 2026-03-09 💬 Slack thread polish and better Feishu audio compatibility.

- 2026-03-08 🚀 Released v0.1.4.post4 — a reliability-packed release with safer defaults, better multi-instance support, sturdier MCP, and major channel and provider improvements. Please see release notes for details.

- 2026-03-07 🚀 Azure OpenAI provider, WhatsApp media, QQ group chats, and more Telegram/Feishu polish.

- 2026-03-06 🪄 Lighter providers, smarter media handling, and sturdier memory and CLI compatibility.

- 2026-03-05 ⚡️ Telegram draft streaming, MCP SSE support, and broader channel reliability fixes.

- 2026-03-04 🛠️ Dependency cleanup, safer file reads, and another round of test and Cron fixes.

- 2026-03-03 🧠 Cleaner user-message merging, safer multimodal saves, and stronger Cron guards.

- 2026-03-02 🛡️ Safer default access control, sturdier Cron reloads, and cleaner Matrix media handling.

- 2026-03-01 🌐 Web proxy support, smarter Cron reminders, and Feishu rich-text parsing improvements.

- 2026-02-28 🚀 Released v0.1.4.post3 — cleaner context, hardened session history, and smarter agent. Please see release notes for details.

- 2026-02-27 🧠 Experimental thinking mode support, DingTalk media messages, Feishu and QQ channel fixes.

- 2026-02-26 🛡️ Session poisoning fix, WhatsApp dedup, Windows path guard, Mistral compatibility.

- 2026-02-25 🧹 New Matrix channel, cleaner session context, auto workspace template sync.

- 2026-02-24 🚀 Released v0.1.4.post2 — a reliability-focused release with a redesigned heartbeat, prompt cache optimization, and hardened provider & channel stability. See release notes for details.

- 2026-02-23 🔧 Virtual tool-call heartbeat, prompt cache optimization, Slack mrkdwn fixes.

- 2026-02-22 🛡️ Slack thread isolation, Discord typing fix, agent reliability improvements.

- 2026-02-21 🎉 Released v0.1.4.post1 — new providers, media support across channels, and major stability improvements. See release notes for details.

- 2026-02-20 🐦 Feishu now receives multimodal files from users. More reliable memory under the hood.

- 2026-02-19 ✨ Slack now sends files, Discord splits long messages, and subagents work in CLI mode.

- 2026-02-18 ⚡️ nanobot now supports VolcEngine, MCP custom auth headers, and Anthropic prompt caching.

- 2026-02-17 🎉 Released v0.1.4 — MCP support, progress streaming, new providers, and multiple channel improvements. Please see release notes for details.

- 2026-02-16 🦞 nanobot now integrates a ClawHub skill — search and install public agent skills.

- 2026-02-15 🔑 nanobot now supports OpenAI Codex provider with OAuth login support.

- 2026-02-14 🔌 nanobot now supports MCP! See MCP section for details.

- 2026-02-13 🎉 Released v0.1.3.post7 — includes security hardening and multiple improvements. Please upgrade to the latest version to address security issues. See release notes for more details.

- 2026-02-12 🧠 Redesigned memory system — Less code, more reliable. Join the discussion about it!

- 2026-02-11 ✨ Enhanced CLI experience and added MiniMax support!

- 2026-02-10 🎉 Released v0.1.3.post6 with improvements! Check the update notes and our roadmap.

- 2026-02-09 💬 Added Slack, Email, and QQ support — nanobot now supports multiple chat platforms!

- 2026-02-08 🔧 Refactored Providers—adding a new LLM provider now takes just 2 simple steps! Check here.

- 2026-02-07 🚀 Released v0.1.3.post5 with Qwen support & several key improvements! Check here for details.

- 2026-02-06 ✨ Added Moonshot/Kimi provider, Discord integration, and enhanced security hardening!

- 2026-02-05 ✨ Added Feishu channel, DeepSeek provider, and enhanced scheduled tasks support!

- 2026-02-04 🚀 Released v0.1.3.post4 with multi-provider & Docker support! Check here for details.

- 2026-02-03 ⚡ Integrated vLLM for local LLM support and improved natural language task scheduling!

- 2026-02-02 🎉 nanobot officially launched! Welcome to try 🐈 nanobot!

💡 Key Features of nanobot

- Ultra-lightweight: stable long-running agent behavior with a small, readable core.

- Research-ready: the codebase is intentionally simple enough to study, modify, and extend.

- Practical: chat channels, API, memory, MCP, and deployment paths are already built in.

- Hackable: you can start fast, then go deeper through repo docs instead of a monolithic landing page.

📦 Install

Important

If you want the newest features and experiments, install from source.

If you want the most stable day-to-day experience, install from PyPI or with `uv`.

Install from source

git clone https://github.com/HKUDS/nanobot.git

cd nanobot

pip install -e .

Install with uv

uv tool install nanobot-ai

Install from PyPI

pip install nanobot-ai

🚀 Quick Start

1. Initialize

nanobot onboard

2. Configure (~/.nanobot/config.json)

Configure these two parts in your config (other options have defaults). Add or merge the following blocks into your existing config instead of replacing the whole file.

Set your API key (e.g. OpenRouter, recommended for global users):

{
  "providers": {
    "openrouter": {
      "apiKey": "sk-or-v1-xxx"
    }
  }
}

Set your model (optionally pin a provider — defaults to auto-detection):

{
  "agents": {
    "defaults": {
      "provider": "openrouter",
      "model": "anthropic/claude-opus-4-6"
    }
  }
}
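Merging these blocks by hand is easy to get wrong when the file already lists other providers or channels. The small Python helper below does the deep merge for you. It is a hypothetical sketch, not a nanobot command: `deep_merge`, `merge_into_config`, `PROVIDER_BLOCK`, and `MODEL_BLOCK` are illustrative names, and it assumes the config file is plain JSON (no comments).

```python
from __future__ import annotations

import json
from pathlib import Path

# The two blocks from the steps above (replace the placeholder key with your own).
PROVIDER_BLOCK = {"providers": {"openrouter": {"apiKey": "sk-or-v1-xxx"}}}
MODEL_BLOCK = {"agents": {"defaults": {"provider": "openrouter",
                                       "model": "anthropic/claude-opus-4-6"}}}


def deep_merge(base: dict, update: dict) -> dict:
    """Return a copy of base with update merged in; nested objects merge key by key."""
    merged = dict(base)
    for key, value in update.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value  # scalars and lists are replaced, not merged
    return merged


def merge_into_config(path: Path, *blocks: dict) -> dict:
    """Merge blocks into the JSON file at path, keeping every unrelated key."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    for block in blocks:
        config = deep_merge(config, block)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

For example, `merge_into_config(Path.home() / ".nanobot" / "config.json", PROVIDER_BLOCK, MODEL_BLOCK)` adds both blocks while leaving other providers or channels in place; only keys that appear in the blocks, such as `apiKey` or `model`, are overwritten.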

3. Chat

nanobot agent

- Want different LLM providers, web search, MCP, security settings, or more config options? See Configuration

- Want to run nanobot in chat apps like Telegram, Discord, WeChat or Feishu? See Chat Apps

- Want Docker or Linux service deployment? See Deployment

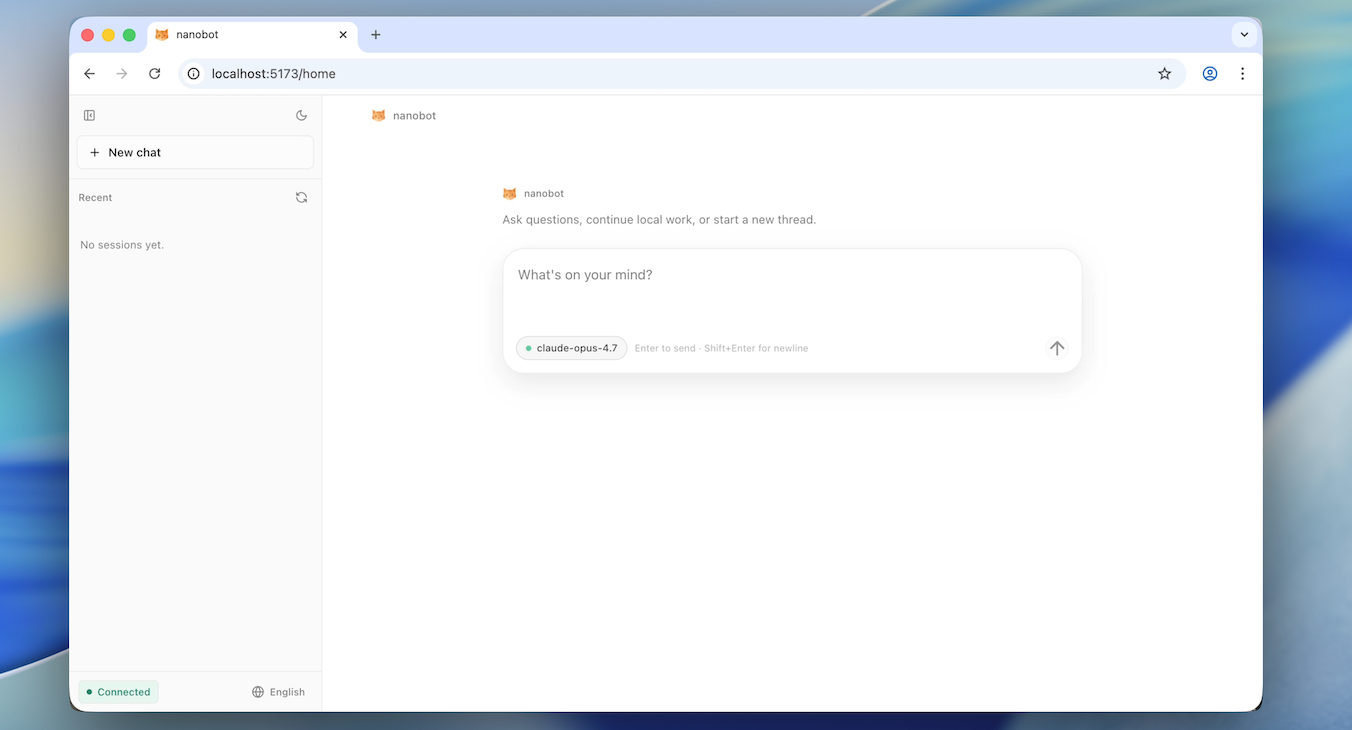

🧪 WebUI (Development)

Note

The WebUI development workflow currently requires a source checkout and is not yet shipped together with the official packaged release. See WebUI Document for full WebUI development docs and build steps.

1. Enable the WebSocket channel in ~/.nanobot/config.json

{ "channels": { "websocket": { "enabled": true } } }

2. Start the gateway

nanobot gateway

3. Start the WebUI dev server

cd webui

bun install

bun run dev

🏗️ Architecture

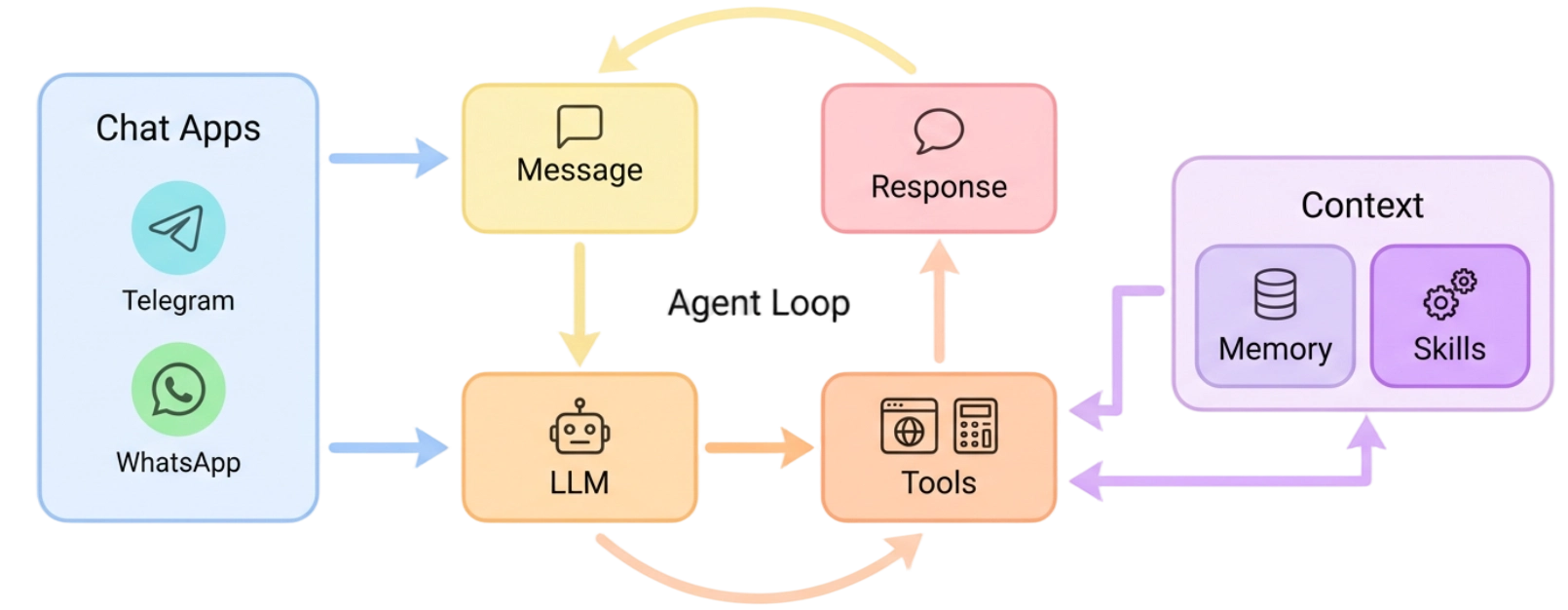

🐈 nanobot stays lightweight by centering everything around a small agent loop: messages come in from chat apps, the LLM decides when tools are needed, and memory or skills are pulled in only as context instead of becoming a heavy orchestration layer. That keeps the core path readable and easy to extend, while still letting you add channels, tools, memory, and deployment options without turning the system into a monolith.
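To make that loop concrete, here is a toy Python sketch of the same shape. It is not nanobot's runner: `run_turn`, `LLMReply`, `ToolCall`, and the message dict layout are hypothetical, and the LLM is any callable you pass in. It shows the LLM deciding when tools are needed, memory and skills entering only as context text, tool failures coming back as results the model can react to, and the whole turn bounded by a `max_iterations` cap.

```python
from __future__ import annotations

from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class LLMReply:
    text: str = ""
    tool_calls: list[ToolCall] = field(default_factory=list)


# Hypothetical interfaces: an LLM maps the running message list to a reply,
# and a tool is any callable that returns a string result.
LLM = Callable[[list[dict]], LLMReply]
Tool = Callable[..., str]


def run_turn(user_message: str, llm: LLM, tools: dict[str, Tool],
             context: list[str], max_iterations: int = 10) -> str:
    """Toy agent loop: ask the LLM, run any tools it requests, repeat until it answers."""
    # Memory and skills are only text in the prompt, not extra control flow.
    messages: list[dict] = [
        {"role": "system", "content": "\n\n".join(context)},
        {"role": "user", "content": user_message},
    ]
    for _ in range(max_iterations):
        reply = llm(messages)
        if not reply.tool_calls:
            return reply.text  # the LLM decided no (more) tools are needed
        messages.append({"role": "assistant", "content": reply.text,
                         "tool_calls": [(c.name, c.args) for c in reply.tool_calls]})
        for call in reply.tool_calls:
            tool = tools.get(call.name)
            try:
                result = tool(**call.args) if tool else f"Error: unknown tool {call.name!r}"
            except Exception as exc:  # a failing tool becomes a result the LLM can react to
                result = f"Error: {exc}"
            messages.append({"role": "tool", "name": call.name, "content": result})
    return "Error: max_iterations reached without a final answer"
```

A scripted stand-in for the LLM is enough to exercise it: return a `ToolCall` on the first call, then plain text once a tool result is the last message.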

✨ Features

| 📈 24/7 Real-Time Market Analysis | 🚀 Full-Stack Software Engineer | 📅 Smart Daily Routine Manager | 📚 Personal Knowledge Assistant |
|---|---|---|---|
| Discovery • Insights • Trends | Develop • Deploy • Scale | Schedule • Automate • Organize | Learn • Memory • Reasoning |

📚 Docs

Browse the repo docs for the latest features and GitHub development version, or visit nanobot.wiki for the stable release documentation.

- Talk to your nanobot with familiar chat apps: Chat Apps

- Configure providers, web search, MCP, and runtime behavior: Configuration

- Integrate nanobot with local tools and automations: OpenAI-Compatible API · Python SDK

- Run nanobot with Docker or as a Linux service: Deployment

🤝 Contribute & Roadmap

PRs welcome! The codebase is intentionally small and readable. 🤗

Branching Strategy

| Branch | Purpose |
|---|---|
| main | Stable releases: bug fixes and minor improvements |
| nightly | Experimental features: new features and breaking changes |

Unsure which branch to target? See CONTRIBUTING.md for details.

Roadmap — Pick an item and open a PR!

- Multi-modal — See and hear (images, voice, video)

- Long-term memory — Never forget important context

- Better reasoning — Multi-step planning and reflection

- More integrations — Calendar and more

- Self-improvement — Learn from feedback and mistakes

Contact

This project was started by Xubin Ren as a personal open-source project and continues to be maintained in an individual capacity using personal resources, with contributions from the open-source community. Feel free to contact xubinrencs@gmail.com for questions, ideas, or collaboration.


Thanks for visiting ✨ nanobot!